Microservices Testing: Strategies for Balancing Speed and Reliability

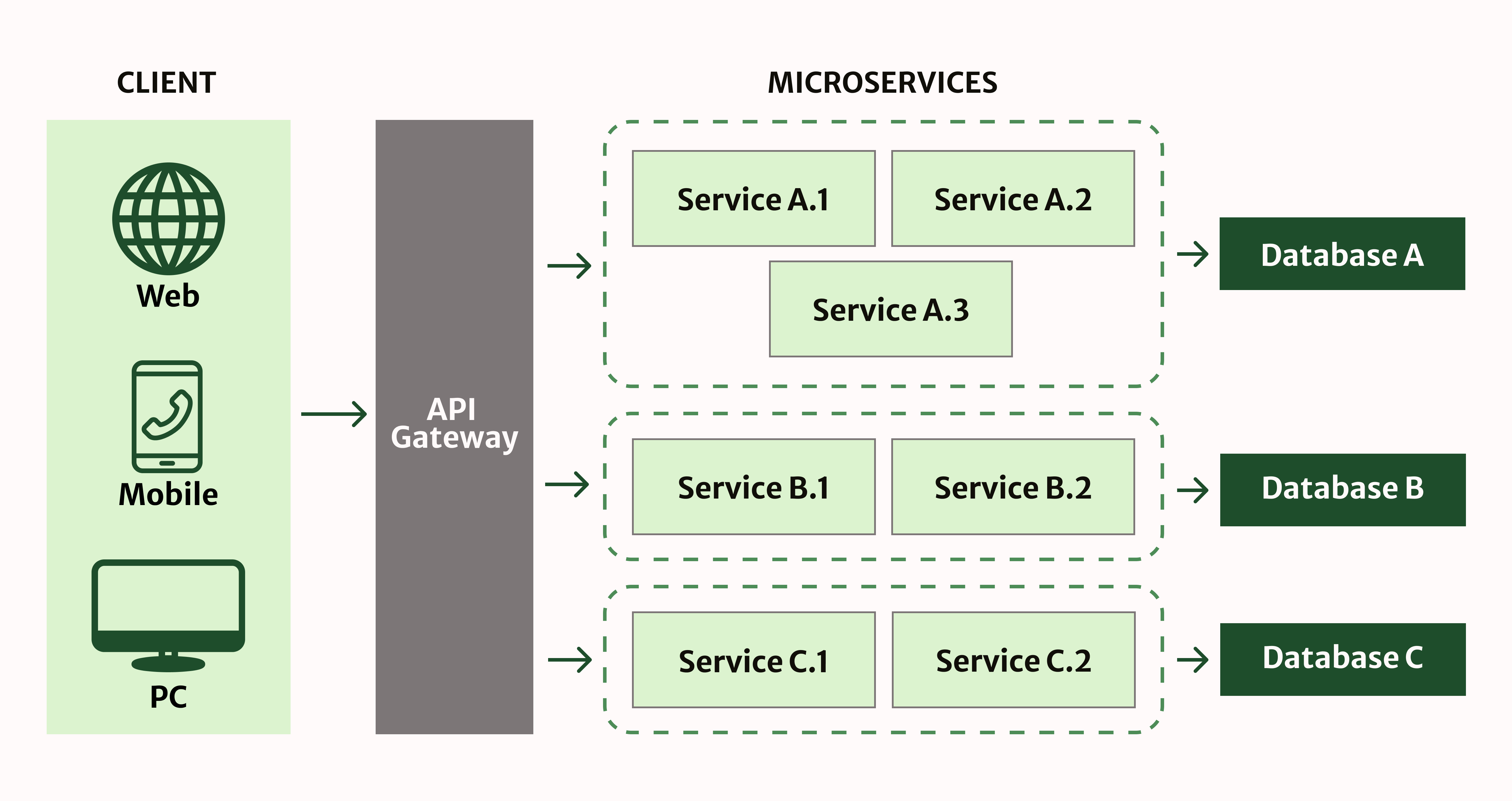

Microservices architecture breaks down monolithic applications into independent services. Each service is responsible for a specific business function. Services communicate through APIs and are deployed independently.

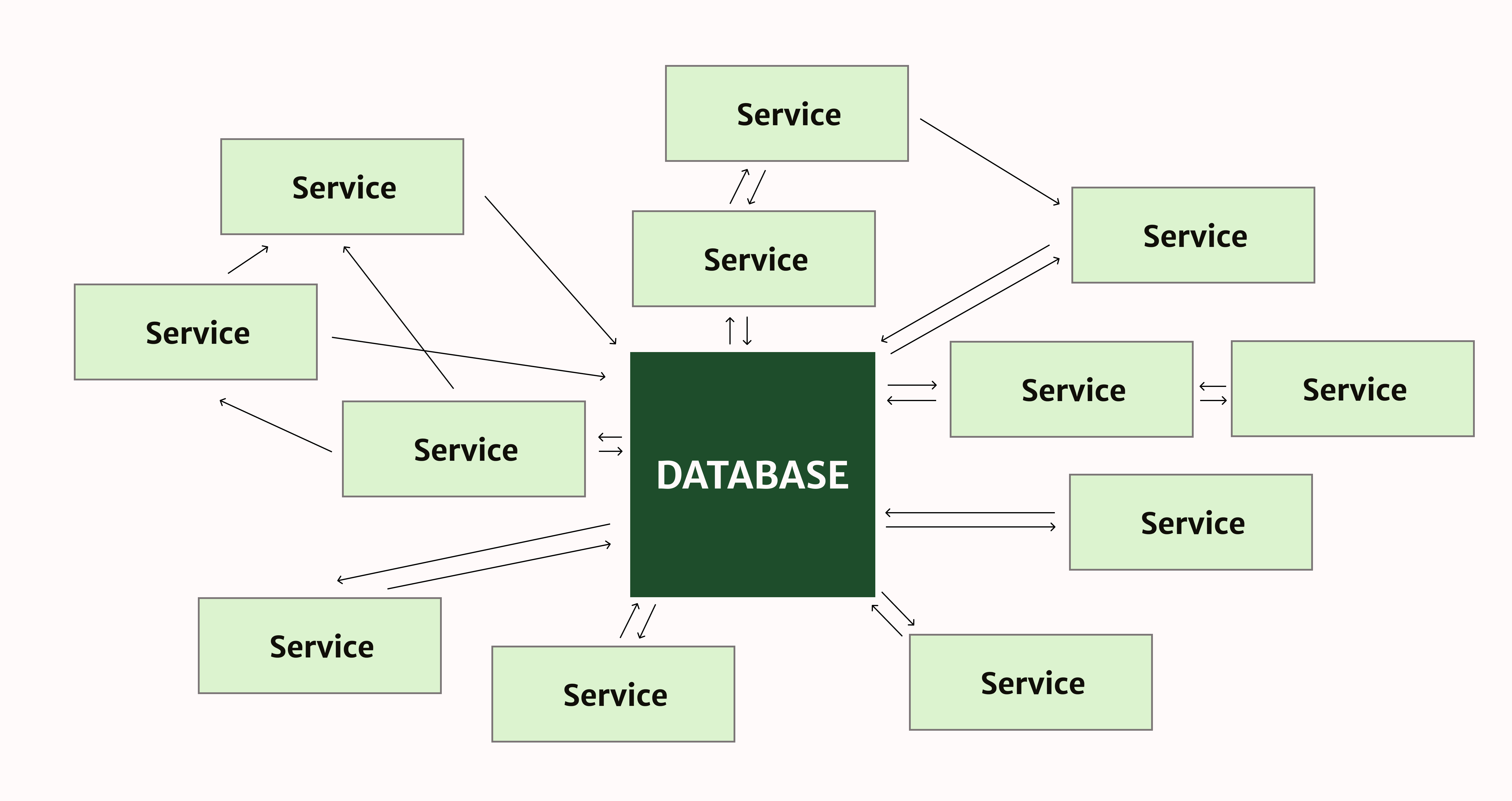

However, traditional testing strategies fail in a distributed environment. Complex dependency graphs produce what is often called a “Death Star” architecture, in which a single failed service can break dozens of other system components. When that happens in production, the business suffers financial losses.

The waterfall testing model cannot keep up with continuous deployment. Teams release updates several times a day, so manual testing becomes a bottleneck that slows the entire process and lets quality slip. Automation has become a necessity for modern development teams.

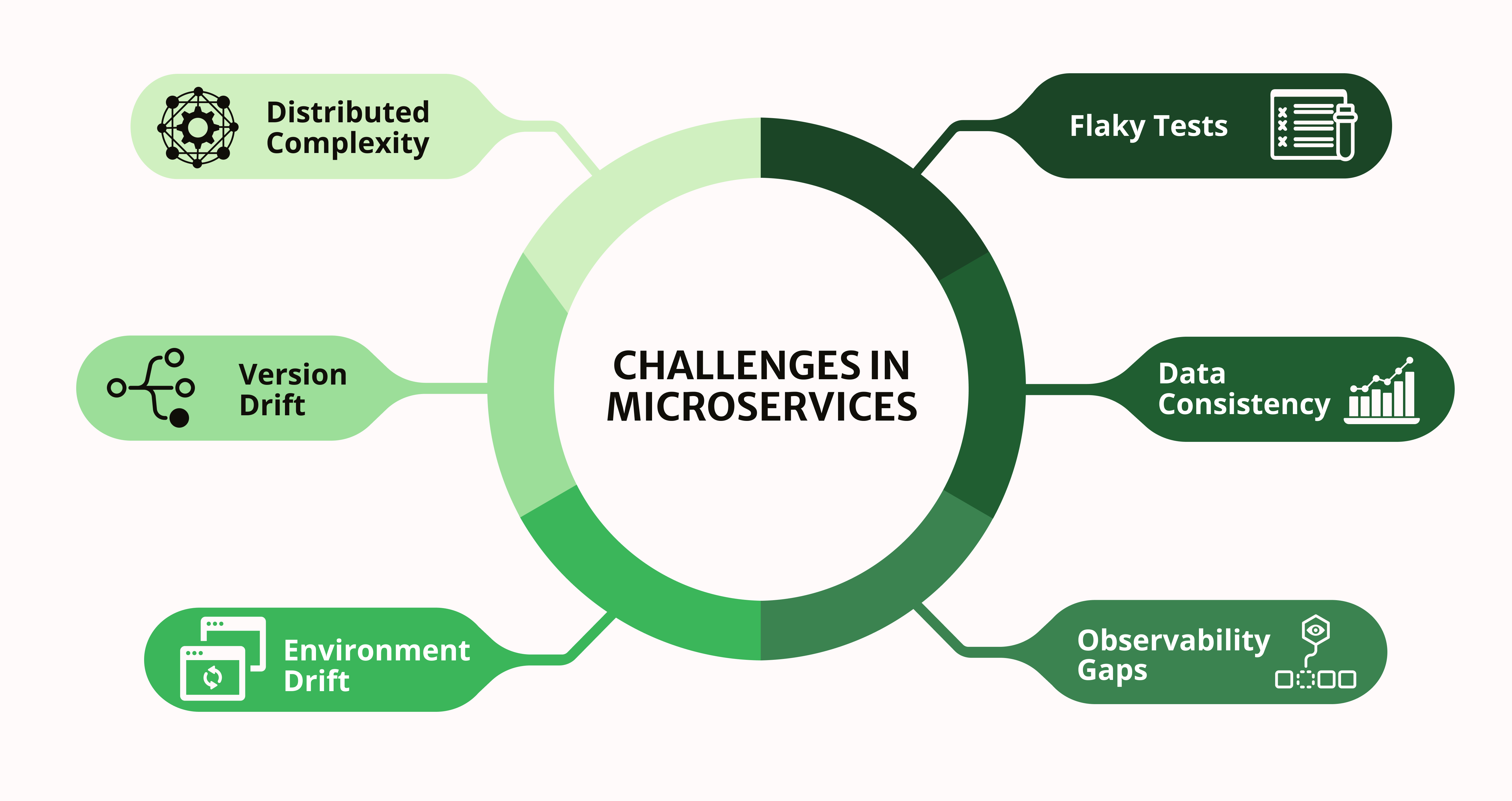

Key Challenges in Microservices Architecture

Testing in microservices presents unique challenges due to the distributed nature of the architecture. Traditional approaches to testing ignore the complexity of inter-service interactions. Challenges arise at every stage of the software development cycle.

Distributed Architecture and Dependencies

Services depend on external APIs to function correctly. Network delays create unpredictable test results. Each new dependency adds interaction paths that must be tested, so complexity grows much faster than the number of services. Each microservice can use a different technology stack, and teams may work in different programming languages at the same time. Synchronizing changes between dependent services requires careful coordination.

Frequent Deployments and Version Inconsistencies

Teams deploy services independently according to different release schedules. Version conflicts regularly break compatibility between system components. Development teams release new features without synchronizing with others, so an instance of one service may end up running against an outdated version of another. Backward compatibility requires special attention with every API change. Semantic versioning helps manage the evolution of interfaces between services.
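As a rough illustration of how semantic versioning can gate compatibility, the sketch below checks whether a provider version can serve a consumer built against a given version. The function names are hypothetical, and the check is deliberately simplified: it handles only plain MAJOR.MINOR.PATCH strings and ignores pre-release tags and the special treatment of 0.x versions.

```python
from typing import NamedTuple


class Version(NamedTuple):
    major: int
    minor: int
    patch: int


def parse_version(text: str) -> Version:
    """Parse a plain MAJOR.MINOR.PATCH string (no pre-release or build tags)."""
    major, minor, patch = (int(part) for part in text.split("."))
    return Version(major, minor, patch)


def is_backward_compatible(required: str, provided: str) -> bool:
    """Return True if a provider at `provided` can serve a consumer built against `required`."""
    need, have = parse_version(required), parse_version(provided)
    # A different major version signals a breaking change.
    if need.major != have.major:
        return False
    # Within the same major version, the provider must be at least as new.
    return (have.minor, have.patch) >= (need.minor, need.patch)
```

A check like this could run in CI before a deployment, failing the build when a consumer pins a version the deployed provider no longer satisfies.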

Test Environment Instability

Maintaining consistent test environments requires significant resources from the DevOps team. Test infrastructure often lags behind production in capacity and configuration. Software engineers encounter problems that cannot be reproduced locally. Environment drift gives development teams a false sense of security, because tests pass in an environment that no longer matches production. Containerization with Docker helps standardize test environments.

Unreliable Tests and False Positive Results

Integration tests for microservices regularly produce inconsistent results. Network timeouts cause false positives in the testing system. Flaky tests completely undermine confidence in the automated test suite. Developers begin to ignore test results over time. Retry mechanisms and proper timeout configuration reduce the number of false positives.
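A retry mechanism of the kind mentioned above might look like the following sketch. The helper name `call_with_retry` and its defaults are assumptions chosen for illustration. It retries only on transient network errors, so a genuine assertion failure is never masked by a retry.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_retry(
    operation: Callable[[], T],
    attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run `operation`, retrying on TimeoutError or ConnectionError with exponential backoff."""
    for attempt in range(1, attempts + 1):
        try:
            return operation()
        except (TimeoutError, ConnectionError):
            if attempt == attempts:
                raise  # out of retries: surface the real failure
            # Wait base_delay, then 2x, then 4x, ... before the next attempt.
            sleep(base_delay * 2 ** (attempt - 1))
    raise ValueError("attempts must be at least 1")
```

The `sleep` parameter is injectable so that the retry logic itself can be tested without real waiting.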

Data Consistency and Test Isolation

Shared databases frequently cause conflicts between parallel tests. Eventual consistency makes writing deterministic tests significantly more difficult. QA engineers must account for delays in data synchronization between services. Race conditions become a frequent problem when testing distributed systems.
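One common way to keep tests deterministic under eventual consistency is to poll for the expected state with a deadline instead of sleeping for a fixed time. The helper below is a minimal sketch; the name `wait_until` and its default timings are assumptions.

```python
import time
from typing import Callable


def wait_until(
    condition: Callable[[], bool],
    timeout: float = 5.0,
    interval: float = 0.1,
) -> bool:
    """Poll `condition` until it returns True or `timeout` seconds elapse."""
    deadline = time.monotonic() + timeout
    while True:
        if condition():
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval)
```

A test would then assert on `wait_until(lambda: ...)` with a condition that reads from the downstream service, which passes as soon as the data arrives and fails with a clear timeout if it never does.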

Observability and Debugging Issues

Tracing errors across multiple services takes up a lot of the team’s time. Logs are scattered across different systems and use different formats. Observability tools are essential for QA teams to work effectively. Without centralized monitoring, debugging becomes a nightmare for engineers.

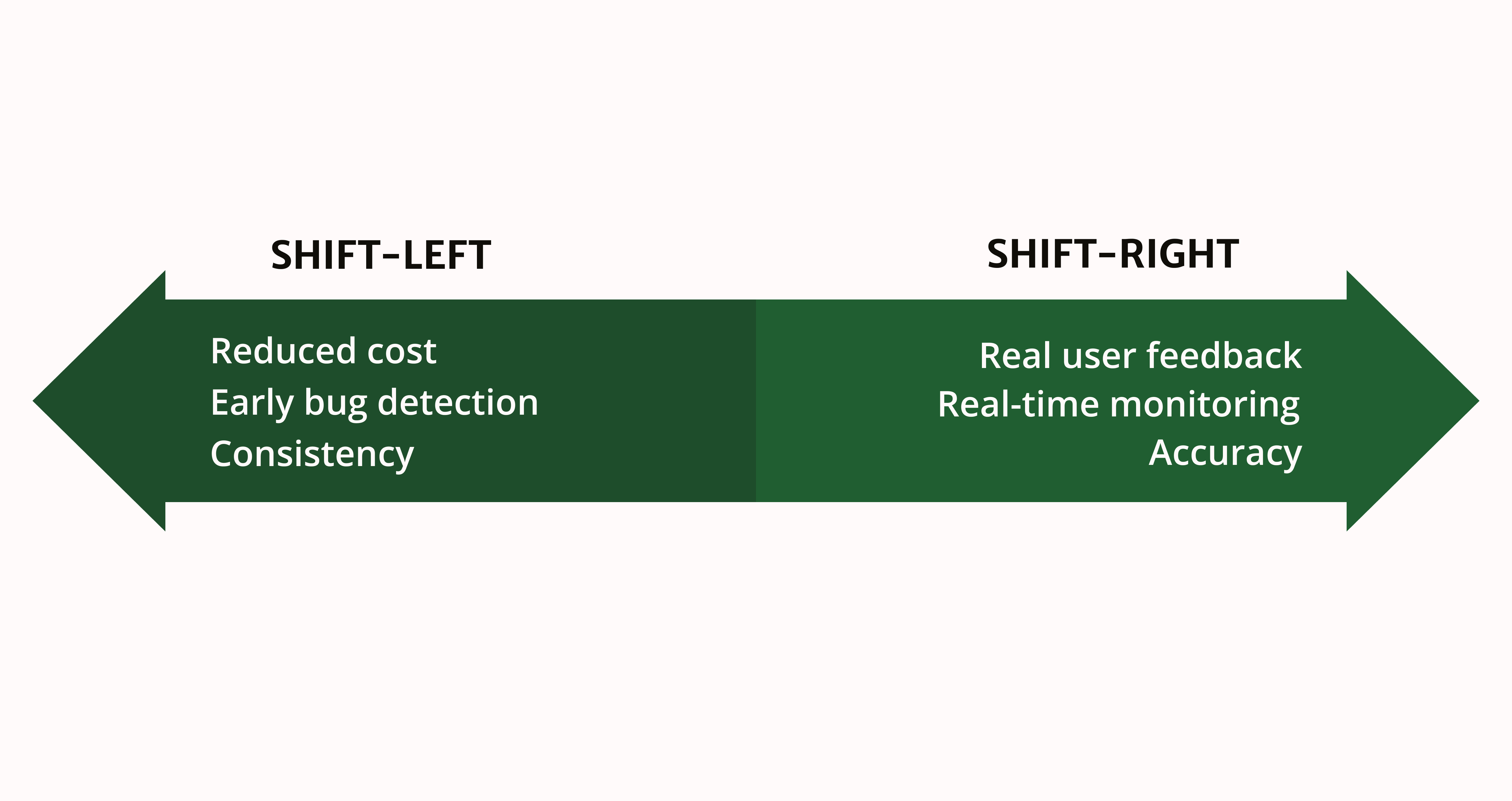

Basic Strategy: Shift-Left and Shift-Right

The balance between speed and reliability requires testing at different stages. A two-pronged approach effectively addresses both development and production issues simultaneously. Combining strategies ensures maximum risk coverage in the system.

Shift-Left (Speed)

Shift-left testing identifies bugs during development through automated service tests. Developers run tests locally before committing code to the repository. Contract testing catches breaking changes between services at an early stage.

The cost of fixing errors is lowest at this stage of development. Static code analysis and linting identify potential problems before the code runs. Pre-commit hooks automatically validate code quality before changes are pushed. This ensures consistent standards across the entire development team.

Shift-Right (Reliability)

Shift-right testing checks behavior in a production environment with real user data. Chaos engineering identifies weaknesses under real load in a microservices architecture. Production monitoring catches problems that test environments miss entirely. Real users become part of the application quality validation process.

Implement distributed tracing to track requests across all system services. Prometheus and Grafana provide near-real-time visibility into service health. Alerts fire when metrics deviate from normal operating ranges. Synthetic monitoring simulates user scenarios to identify issues proactively.

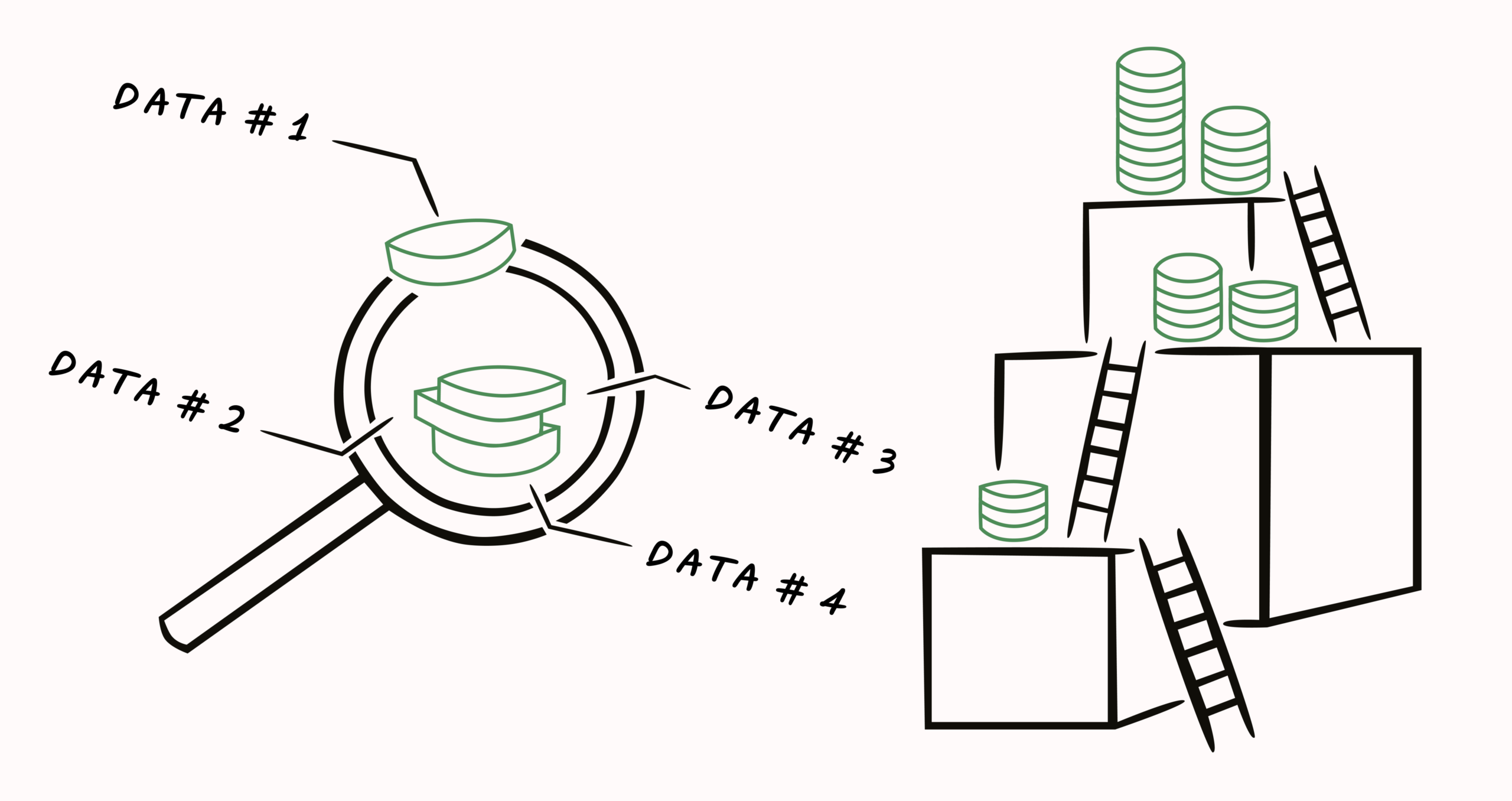

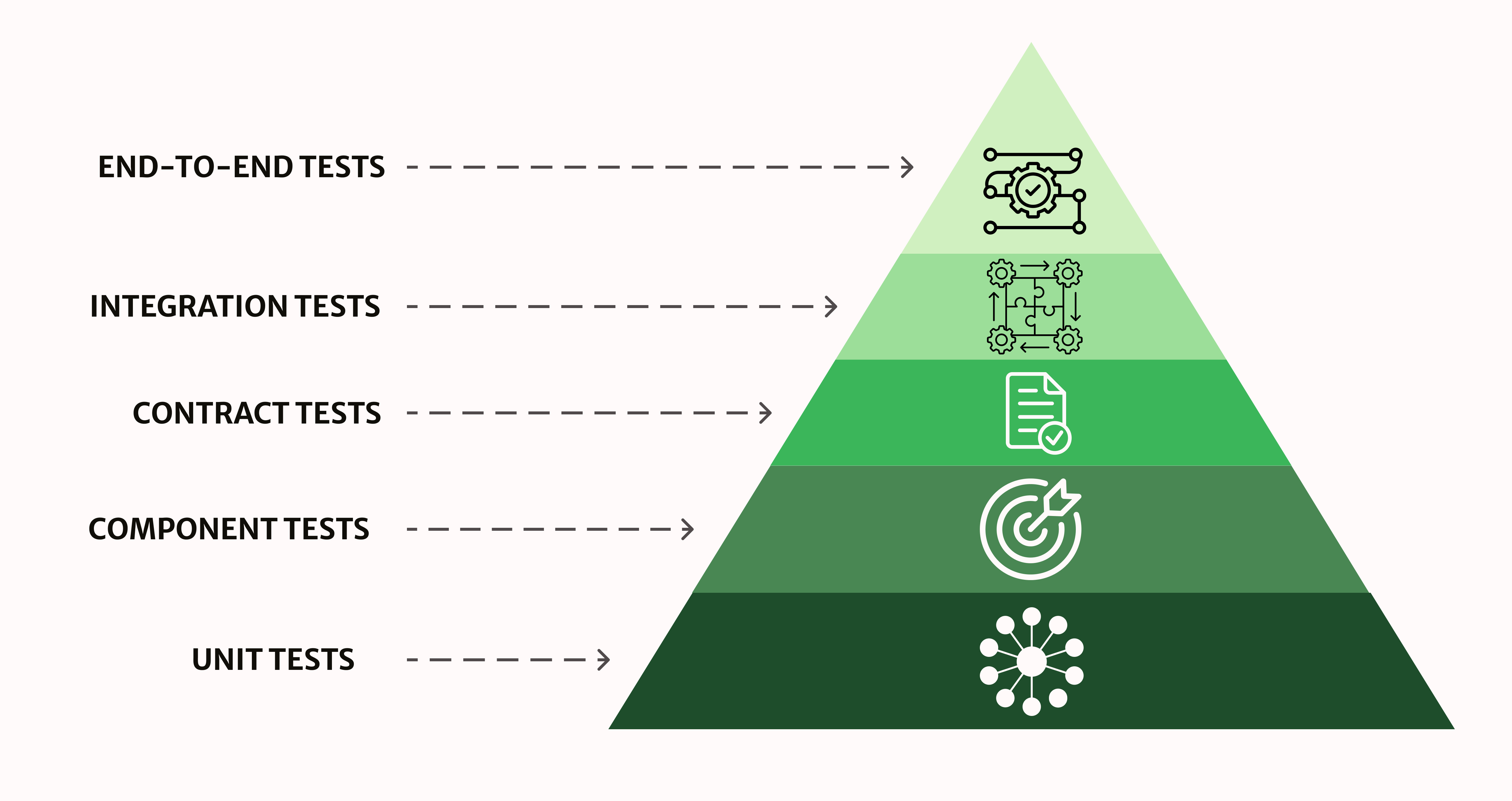

Testing Strategies for Microservices: Rethinking the Pyramid

The classic testing pyramid needs to be seriously adapted for microservices. Distributed architecture requires a different balance between testing levels. The proportions shift significantly in favor of contract testing of services.

Why the Classic Pyramid Requires Adaptation

In a microservices architecture, network and integration failures can become as common as logic errors in the code. The API becomes the main interface between teams. A testing approach designed for a monolith does not transfer well to this setting.

Recommended Distribution of Tests for Microservices

The correct strategy includes several levels with different testing objectives:

- Unit testing. Tests individual functions in complete isolation from external dependencies. These tests run fastest and are cheapest to maintain. However, integration issues remain completely invisible to developers. Unit tests should always form the basis of the microservices testing pyramid.

- Component testing. Isolates one service from all other system components. External dependencies are replaced with test doubles such as mocks or stubs. This approach tests the functionality of the service without actual network API calls. Isolation keeps test results stable regardless of the state of other services.

- Contract testing. Consumer-driven contracts explicitly define the agreements for interaction via API. Pact generates contracts from the expectations that consumers express in their tests. Providers validate these contracts continuously during the application build process. The strategy catches breaking changes without relying on costly integration tests.

- Integration tests. Verify the correctness of service interactions over a real network. Focus on critical paths rather than comprehensive coverage. Keep the number of integration tests small to save time. Each test covers only key component interaction scenarios.

- End-to-end testing. Validates complete user scenarios across all services of a distributed system. These tests are slow, expensive, and fragile by nature. Limit E2E tests to happy path scenarios of the company’s core business processes. Maintaining such tests requires significant, ongoing effort from the development team.

With this layered approach, services can evolve independently while remaining compatible with other components.
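To make the contract-testing level more concrete, here is a hand-rolled sketch of the underlying idea: the consumer declares the fields it relies on, and the provider build checks its response against that declaration. This is not the Pact API. The contract format, field names, and `contract_violations` function are hypothetical and exist only for illustration.

```python
from typing import Any

# Hypothetical contract: the fields an "orders" consumer reads from a provider response.
ORDER_CONTRACT: dict[str, type] = {
    "id": str,
    "total_cents": int,
    "status": str,
}


def contract_violations(response: dict[str, Any], contract: dict[str, type]) -> list[str]:
    """Return human-readable violations; an empty list means the contract holds."""
    problems = []
    for field, expected_type in contract.items():
        if field not in response:
            problems.append(f"missing field: {field}")
        elif not isinstance(response[field], expected_type):
            actual = type(response[field]).__name__
            problems.append(f"{field}: expected {expected_type.__name__}, got {actual}")
    return problems
```

Extra fields in the response are allowed, so the provider can add data freely. Only removing or retyping a field that a consumer depends on counts as a breaking change.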

How Excessive Reliance on E2E Slows Down Teams

Excessive E2E testing becomes a bottleneck in the CI/CD pipeline. A full system deployment can take 30-60 minutes for each run. Slow feedback loops sharply reduce the productivity of development teams.

Fragile E2E tests produce false positives day after day. Teams end up spending more time fixing flaky tests than developing new features. The right balance in the testing pyramid addresses this problem.

Advanced Strategies for Improving Reliability

Modern approaches go beyond traditional microservices test automation. These techniques help minimize risks and keep system availability high. A proactive approach helps prevent production incidents.

Service Virtualization

Virtual services simulate responses based on recorded interactions between components. This technique unblocks development when external partner dependencies are unavailable.

Service virtualization lets teams test against dependencies without the cost of accessing or provisioning the real systems. Teams work in parallel without blocking each other during development. Mocks reproduce edge cases that are difficult to create in real systems.
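The record-and-replay idea can be sketched in a few lines. The `VirtualService` class, the payment paths, and the recorded payloads below are all hypothetical. A real virtualization tool would capture these recordings from live traffic rather than have them written by hand.

```python
class VirtualService:
    """Replays recorded responses instead of calling the real dependency."""

    def __init__(self, recordings: dict[tuple[str, str], dict]):
        self._recordings = recordings

    def request(self, method: str, path: str) -> dict:
        key = (method.upper(), path)
        if key not in self._recordings:
            # Fail loudly so a test never silently depends on an unrecorded call.
            raise LookupError(f"no recording for {method.upper()} {path}")
        return self._recordings[key]


# Hypothetical recordings for a partner payment API, including an outage
# that would be hard to trigger on demand against the real system.
payments = VirtualService({
    ("GET", "/payments/42"): {"status": 200, "body": {"state": "settled"}},
    ("POST", "/payments"): {"status": 503, "body": {"error": "upstream unavailable"}},
})
```

Tests can then exercise both the normal path and the outage path without any access to the partner system.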

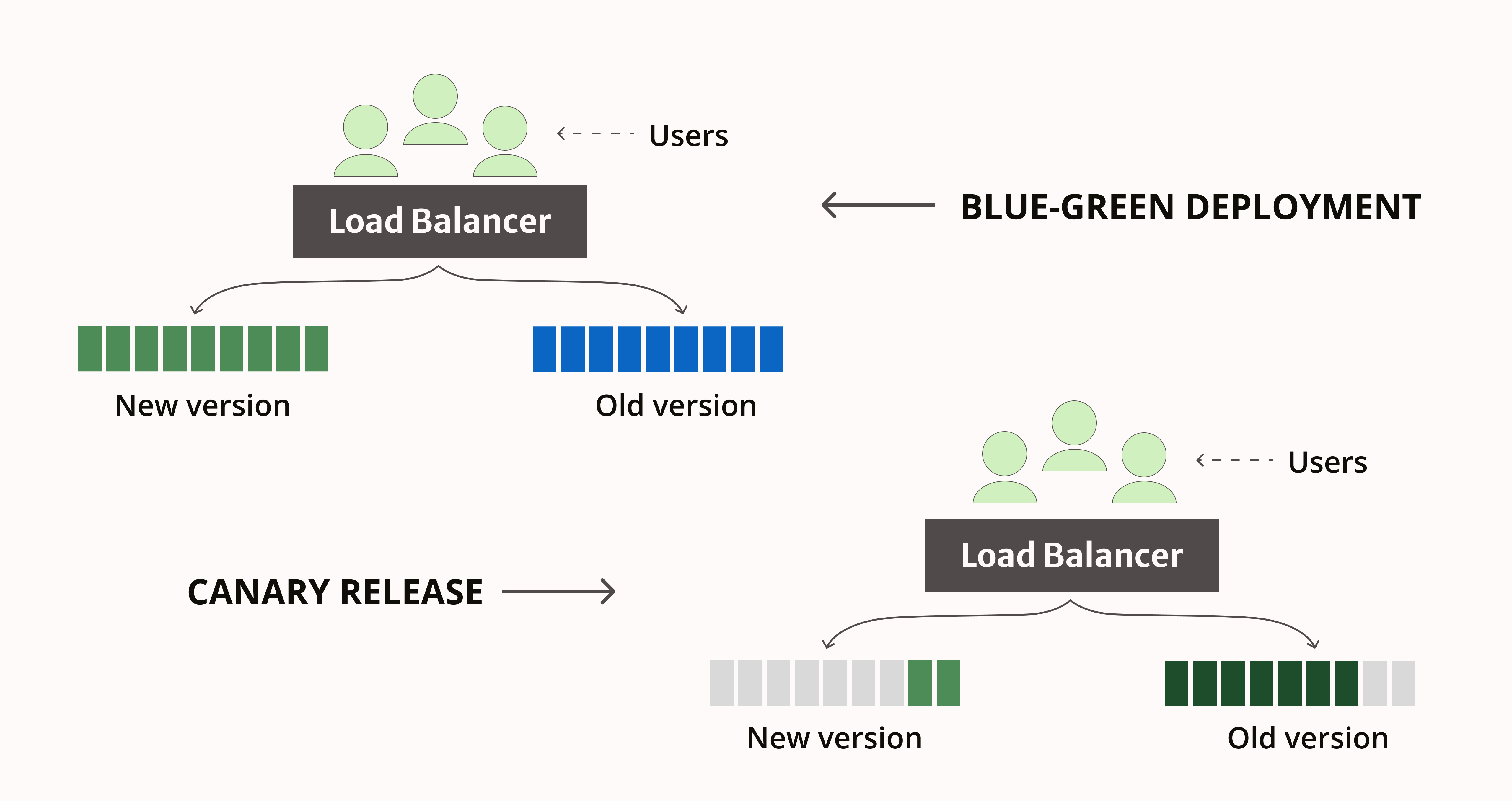

Canary Releases and Blue-Green Deployment

Canary releases make new versions available to a small percentage of users first. Real-time monitoring of error rates and performance metrics determines whether the rollout continues. Blue-green deployment maintains two identical production environments and switches traffic from one to the other.

Both strategies limit the impact of a failed release. Rollback is fast, because traffic can be routed back to the previous version as soon as problems are detected. Feature flags allow you to enable or disable functionality without redeploying services.
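One simple way to pick the "small percentage of users" for a canary is to hash a stable user identifier into a bucket. The sketch below assumes string user IDs. The function names are hypothetical, and in practice this decision usually lives in a load balancer or service mesh rather than in application code.

```python
import hashlib


def in_canary(user_id: str, percent: int) -> bool:
    """Deterministically place roughly `percent` percent of users in the canary group."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # stable bucket in 0..99
    return bucket < percent


def choose_version(user_id: str, canary_percent: int) -> str:
    """Route a user to the canary or the stable version."""
    return "canary" if in_canary(user_id, canary_percent) else "stable"
```

Because the bucket depends only on the user ID, each user sees a consistent version across requests. Raising the percentage only adds users to the canary group, and setting it to 0 acts as an immediate rollback.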

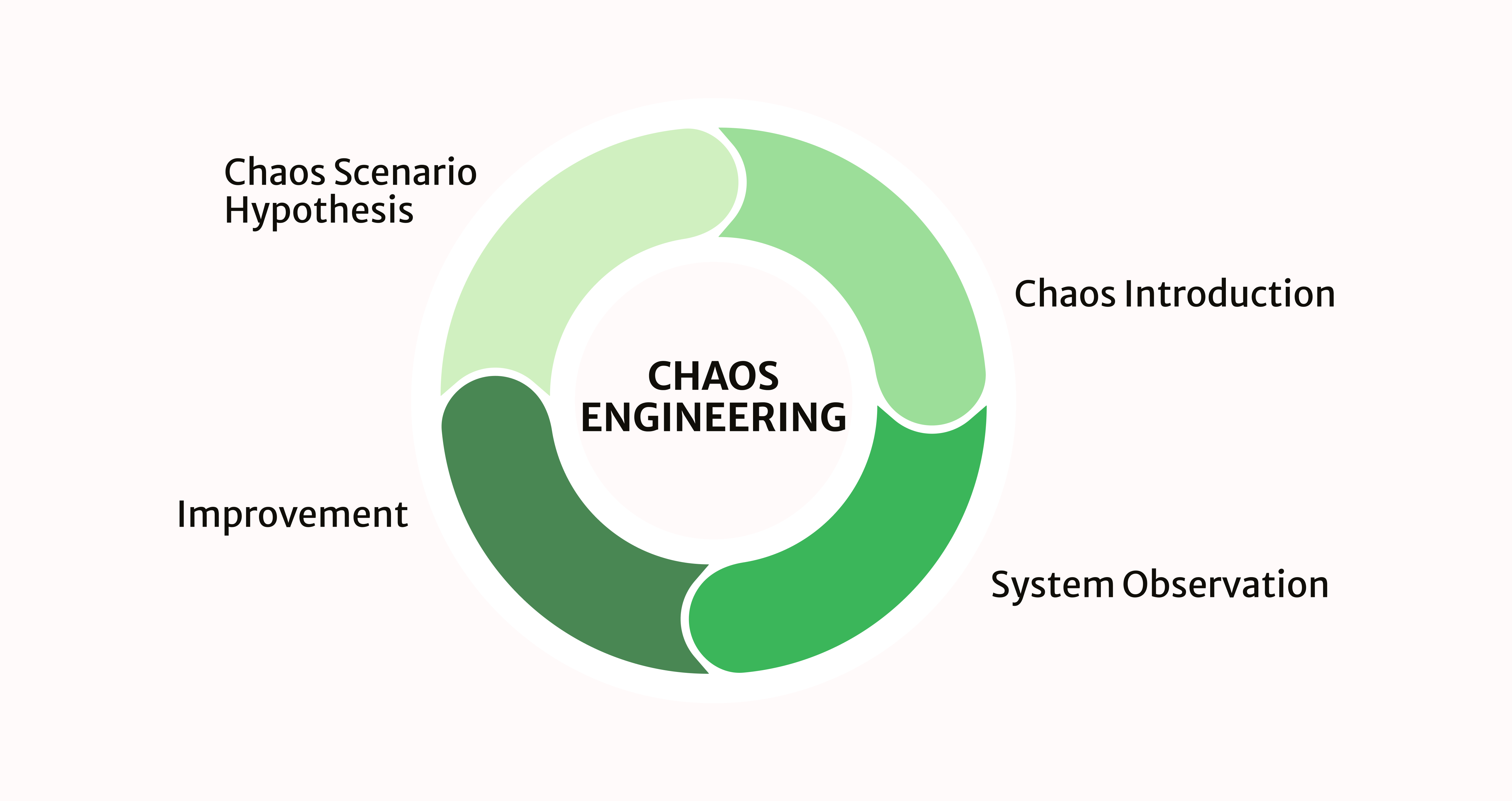

Chaos Engineering

Chaos engineering means intentionally introducing failures into the system to test the resilience of the architecture on a continuous basis. A typical experiment is to kill random service instances during peak load hours.

Chaos engineering helps build more resilient applications gradually and methodically. Netflix has been actively using this practice in its system infrastructure for many years. The system must remain operational regardless of any failures of individual components. Regular chaos experiments identify weaknesses before real incidents occur.
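At the level of a single service, the same idea can be tried in a test by injecting failures into a dependency call and checking that the caller degrades gracefully. The wrapper and the `get_recommendations` caller below are hypothetical, and the failure type and rate are assumptions. Dedicated chaos tools work at the infrastructure level instead.

```python
import random
from typing import Callable, TypeVar

T = TypeVar("T")


def with_chaos(
    operation: Callable[[], T],
    failure_rate: float,
    rng: random.Random,
) -> Callable[[], T]:
    """Wrap `operation` so that a fraction of calls raise ConnectionError instead."""
    def wrapped() -> T:
        if rng.random() < failure_rate:
            raise ConnectionError("injected failure")
        return operation()
    return wrapped


def get_recommendations(fetch: Callable[[], list[str]]) -> list[str]:
    """Hypothetical caller that degrades to an empty list when its dependency fails."""
    try:
        return fetch()
    except ConnectionError:
        return []
```

Passing a seeded `random.Random` keeps an experiment reproducible, which matters when a chaos run uncovers a failure that needs to be debugged.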

Microservices Testing Tools: A Set of QA Tools

Choosing the right tools speeds up the microservices test automation process significantly. Each tool serves specific purposes in the overall testing strategy. Integrating tools creates a seamless workflow for application development teams.

| Test Type | Recommended Tools |
| --- | --- |
| Contract Testing | Pact, Spring Cloud Contract |
| API Testing | Postman, RestAssured, Tavern |
| Performance | JMeter, k6 |
| Observability | Prometheus, Grafana, Jaeger (Tracing) |

White Test Lab uses a combination of these tools for a comprehensive QA process. We help teams choose the optimal set of tools for the specifics of their project. The right toolchain is critical to automating microservices testing successfully.

Conclusion

Reliability is a continuous process throughout the entire software development lifecycle. Automated safeguards catch problems before they reach the production environment. In a microservice architecture, effective QA specialists build systems that detect errors automatically. Quality is built into the process rather than checked at the end of the cycle.

White Test Lab specializes in ensuring the quality of microservice applications of varying complexity. Our experts design comprehensive testing strategies for each client’s unique architecture. We help teams achieve both speed and reliability. Our experience spans various industries and technology stacks.

Having trouble scaling your automated microservices test suite? Contact our quality assurance consultants for a customized system audit. We will identify gaps in your testing and recommend practical improvements to your approach. The initial consultation will help you determine the priority areas for QA process development.