Data Warehouse Testing

Many companies take data from a variety of systems and keep it in a data warehouse. This is done so the information can be used for reports and analysis. Teams depend on this data to comprehend what is occurring within the business, making decisions based on actual figures.

However, if the information is not correct, then also the reports will be incorrect. Missing data, wrong transformations or numbers that do not match can quickly result in incorrect decisions. This is why it becomes very crucial to test a data warehouse.

Different from the usual application testing, data warehouse testing is centered on the data itself. It looks at how this data shifts between systems and checks if the end outcomes are correct and steady.

In this article, we will discuss the major kinds of data warehouse testing. We will also explain how the process of testing works and what difficulties teams might face.

What Is Data Warehouse Testing?

Testing a data warehouse involves ensuring that the data in your warehouse is meaningful and accurate. It verifies if the data has been correctly extracted from source systems, transformed appropriately, stored accurately, and displayed properly in reports.

Different from application testing, you are not pressing buttons or observing UI behavior. Usually, you compare datasets, execute queries, and confirm that numbers correspond across systems. If the sales report indicates 14,548 orders, it is necessary to ensure this number originates from the source data.

In analytics and business intelligence systems, the main goal is simple: make sure people can trust the data.

Good DWH testing helps ensure that:

- Data is loaded correctly from source systems

- Transformations and calculations work as expected

- No data disappears along the way

- Duplicate or inconsistent records are detected

- Reports show the right numbers

If these inspections are not done, issues might appear in the future.

A data warehouse is not a single system. It is an arrangement of systems where the data shifts step by step (data warehousing). Usually, testing follows this same route. All begins with the systems where data is first produced. These could involve:

- Application databases

- CRM or ERP systems

- Third-party services and APIs

- Logs or event tracking systems

In this phase, testing is mainly about confirming that the correct data is being extracted. If the source data is incomplete or wrongly mapped, it cannot be corrected by the rest of the process.

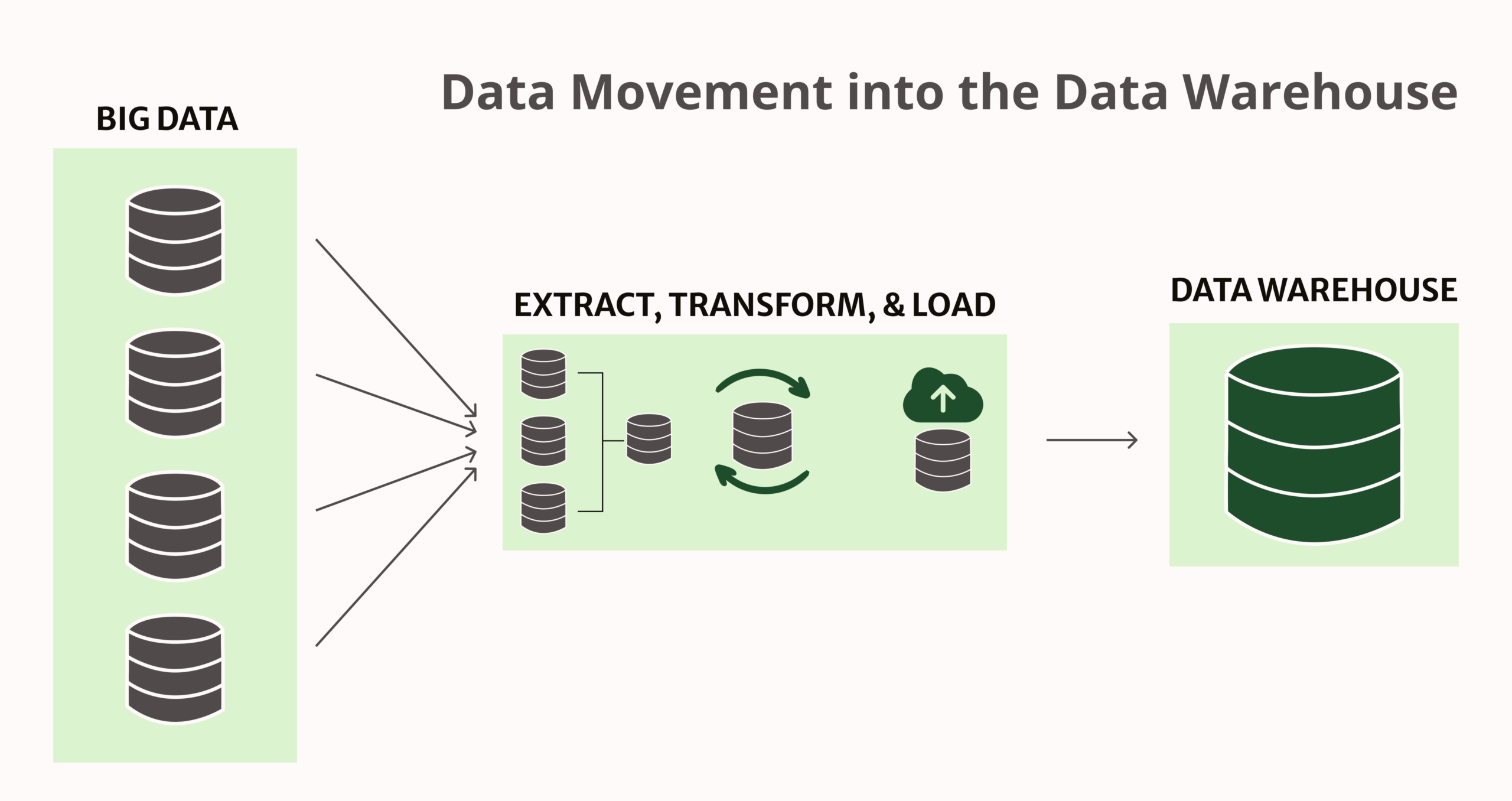

Once data is extracted, it moves through what are often known as ETL (Extract, Transform, Load) or ELT (Extract, Load, Transform) processes. These pipelines transfer the data from source systems to the warehouse and make it ready for analysis.

During this stage, data is typically:

- Cleaned and standardized

- Filtered or enriched

- Joined with other datasets

- Aggregated or calculated

Many data issues appear at this step.

Next, the data warehouse is where the processed data is stored and organized for analysis.

Data warehouse testing here focuses on questions like:

- Does the warehouse contain all expected data?

- Are relationships between tables correct?

- Are totals consistent with the source data?

- Is historical data preserved properly?

If something is wrong at this level, every report built on top of the warehouse will reflect the same problem.

And at the top are BI and reporting tools – dashboards and reports that business users rely on.

This is usually where data problems become visible first. Data warehouse testing at this level includes:

- Comparing report numbers with warehouse data

- Verifying filters and calculations

- Checking that reports use the correct datasets

From a business perspective, reports are the final product, so they need to be accurate.

Why Data Warehouse Testing Matters

Majority of firms consider the data warehouse as their truth source. It is a foundation for reports, dashboards, and predictions, with people normally believing that the information is accurate. However, if there exists any discrepancy in the data, then decisions derived from it will also be incorrect. Data warehouse testing ensures that the figures people observe are truly reflective of what is going on in the business.

Better Decisions Start with Accurate Data

Often, important business choices depend on a few crucial metrics such as revenue or conversion rates. These figures typically originate straight from the data warehouse. If this information is not correct, teams could make wrong judgments about performance or concentrate on inappropriate priorities.

Preventing Data Issues in Data Pipelines

When data travels from the source systems to the warehouse, it goes through many processing stages and transformations. Each stage presents a possibility for something to go wrong. A minor error in transformation logic, a failed load of data, or an unnoticed change in schema can impact vast quantities of data.

Keeping Data Consistent Across Systems

A quick way to lose trust in a data warehouse is when the figures between systems do not correspond. The identical measure could display varying values on a dashboard, report, and source database. When this occurs, teams begin doubting which version is accurate.

Compliance and Regulatory Requirements

In certain sectors, having accurate data is not only a technical matter but also a legal necessity. Companies might have to prove that their information is comprehensive, correct, and able to be tracked. Wrong reports or absent records can pose compliance issues and cause difficulties when audited.

The Impact of Poor Data Quality

Data of poor quality can cause constant problems within a company. Staff may have to spend time looking into numbers that don’t match, fixing data by hand, or making reports again from scratch. As the days go by, employees might lose trust in the entire data storage system and begin maintaining their own versions of this information using spreadsheets or different tools separately.

When such an event occurs, it becomes more difficult to maintain consistency. Good testing of the data warehouse can help avoid these scenarios by ensuring that the data is stable and reliable from the beginning.

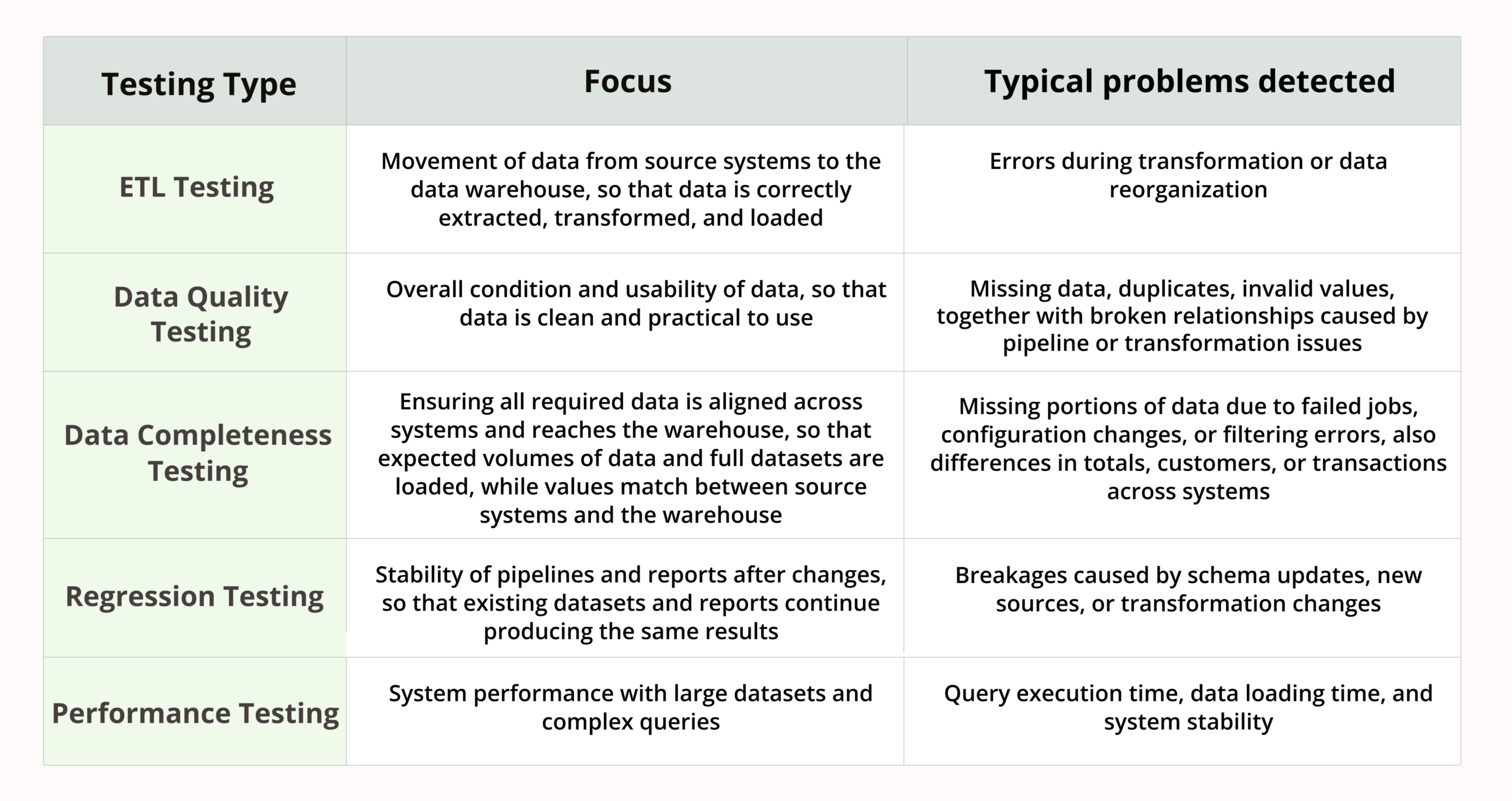

Types of Data Warehouse Testing

Data warehouse testing includes many areas as the data passes through various phases before it reaches a report. Majority of projects apply a mix of testing types, each concentrating on diverse parts of the data process.

ETL Testing

ETL testing is about checking the movement of data from source systems to the data warehouse. Most problems typically occur here, as during this process, the data gets transformed and reorganized.

Data Extraction Testing

In this phase, testers make sure that data is properly taken from source systems. The aim is to verify that the anticipated records are retrieved and no crucial information is missed out.

Normally, this includes making comparison of row numbers, inspecting example records, and confirming that the right fields are taken out.

Data Transformation Testing

Testing for transformation confirms that data is handled as per the set rules. For instance, testing could verify that currency conversions are done correctly, statuses correspond accurately, or calculated fields give out the anticipated results. Minor logic errors in this area can influence a huge amount of data; hence it receives substantial focus.

Data Loading Accuracy

After the data is converted, it must be loaded into the warehouse in a proper way. Checking during this phase ensures that the end data corresponds to what was anticipated after transformation.

This involves confirming sums, inspecting main fields, and ensuring that records are placed in the correct tables. If loading does not succeed or only finishes partially, the warehouse may have incomplete or mismatched data.

Data Quality Testing

Data quality testing mainly looks at the state of the data. It is necessary that even if pipelines function on a technical level, the data must be clean and practical to use.

Missing Data

A frequent problem is that data can be missing. This happens when records are possibly overlooked during extraction or they might be discarded during transformations and this occurs without immediate detection by anyone.

Duplicate Records

Duplicates may show up due to various causes like loading data multiple times. As time passes, these duplicates can twist the metrics and make reports not trustworthy. Testing helps detect repeated records and ensures deduplication rules work as expected.

Invalid Values

Occasionally, data seems to load in a proper manner, but it includes values that are not logical, like negative amounts where there is no place for them, dates that are impossible, or wrong status values. Data quality checks help catch these issues before they appear in reports.

Data Integrity Checks

Testing for data integrity confirms that the connections between tables stay accurate. For instance, orders must refer to customers who exist, and transactions should be associated with legitimate accounts. Broken relationships often indicate problems in data pipelines or transformation logic.

Data Completeness Testing

Data completeness testing checks that all required data actually reaches the warehouse. It may seem clear, but lack of data is a very frequent issue in actual projects. A job that didn’t succeed, a change in settings, or an error with filtering can take away some parts of the dataset. Usually, completeness testing checks if the anticipated amounts of data come in and that essential datasets are loaded entirely.

Data Consistency Testing

Data consistency testing makes sure the same data looks the same across different systems. For instance, the overall income in the warehouse must correspond to the total income in the source system. The number of customers and transactions should also be consistent.

Regression Testing

Regression testing checks that new changes don’t break existing data pipelines or reports. Data warehouses are always changing – they add new sources, alter transformations and update schemas. With every change comes the risk that something which previously worked may no longer function properly. Regression testing is useful to confirm that the existing reports and datasets continue giving the same accurate results after any updates.

Performance Testing

Performance testing focuses on checking how well the data warehouse performs when there is a lot of data and complicated queries. Usual inspections involve the time taken for query execution, data loading duration, and how well the system can handle large datasets without any failures.

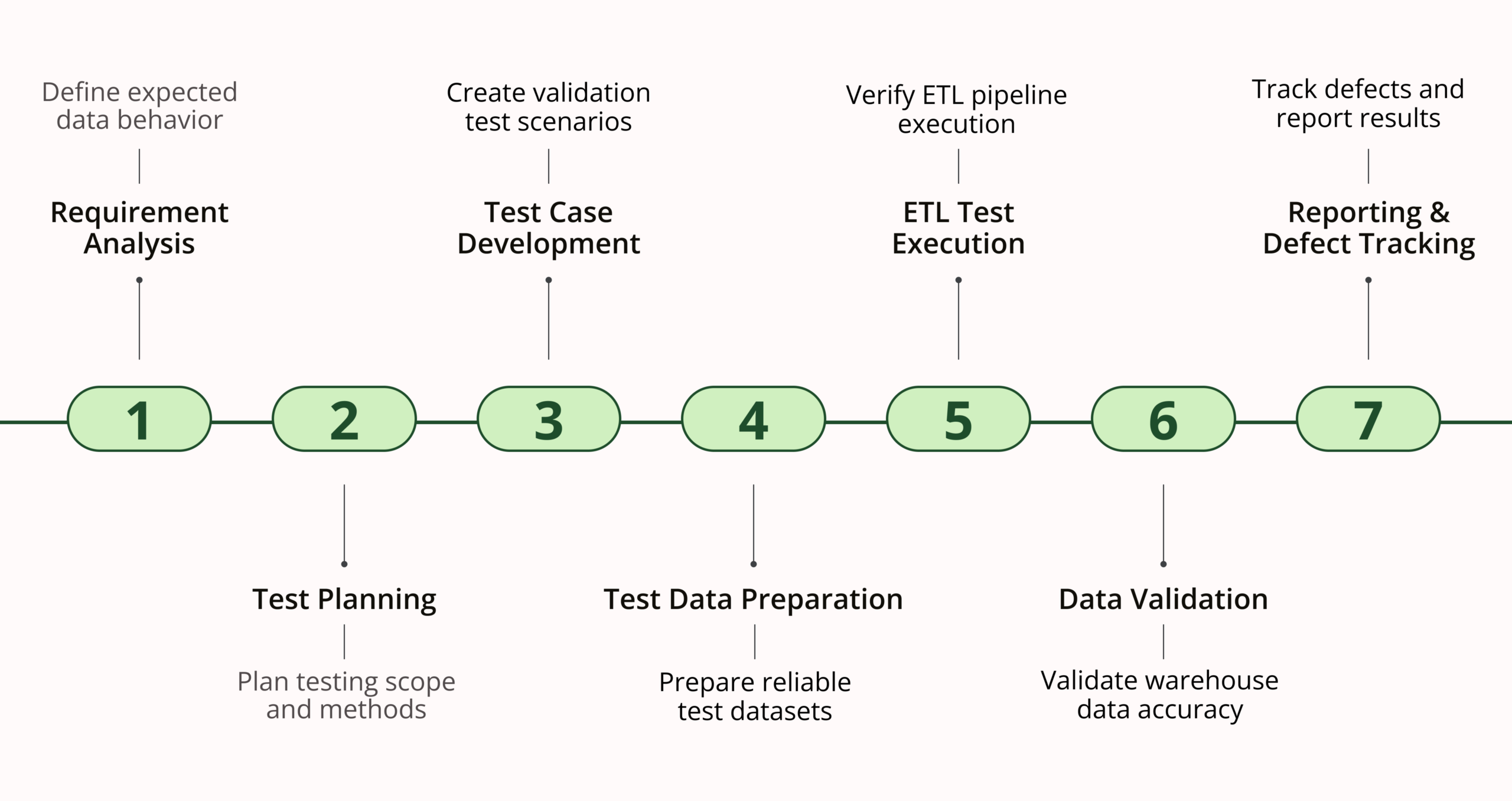

Data Warehouse Testing Process

Requirements Analysis

First, it is necessary to understand what the data warehouse’s function should be. Testers examine requirements, data mappings, transformation rules, and report logic for understanding how the data must act. If there isn’t a clear idea of what results are expected, effective testing becomes nearly impossible.

Test Planning

When the team is preparing for testing, they determine what aspects will undergo tests and in which manner. This involves pinpointing data origins, making decisions about necessary verifications, and selecting suitable methods and tools for testing.

Test Case Development

During this phase, the testers create test scenarios drawing from requirements and data movements. These typically consist of inquiries and validation rules that can verify if the data has been handled correctly.

Test Data Preparation

For testing, we need trustworthy data. Those who test make ready datasets which can be used to confirm transformations and calculations. These include normal situations as well as edge-case scenarios.

ETL Test Execution

When all preparations are complete, we carry out ETL tests to confirm that data is being extracted, transformed, and loaded into the warehouse properly. This stage usually requires executing queries and checking results between different systems.

Data Validation

Once the ETL process is completed, testers ensure to validate the end data in the warehouse. They perform tasks such as checking totals, examining relationships between tables, and making sure that results align with source data along with requirements.

Reporting and Defect Tracking

The last stage involves recording outcomes and monitoring problems. Any inconsistencies or mistakes are noted down as defects and looked into. Clear reports aid teams in understanding what has been examined and what remains to be done.

Key Challenges in Data Warehouse Testing

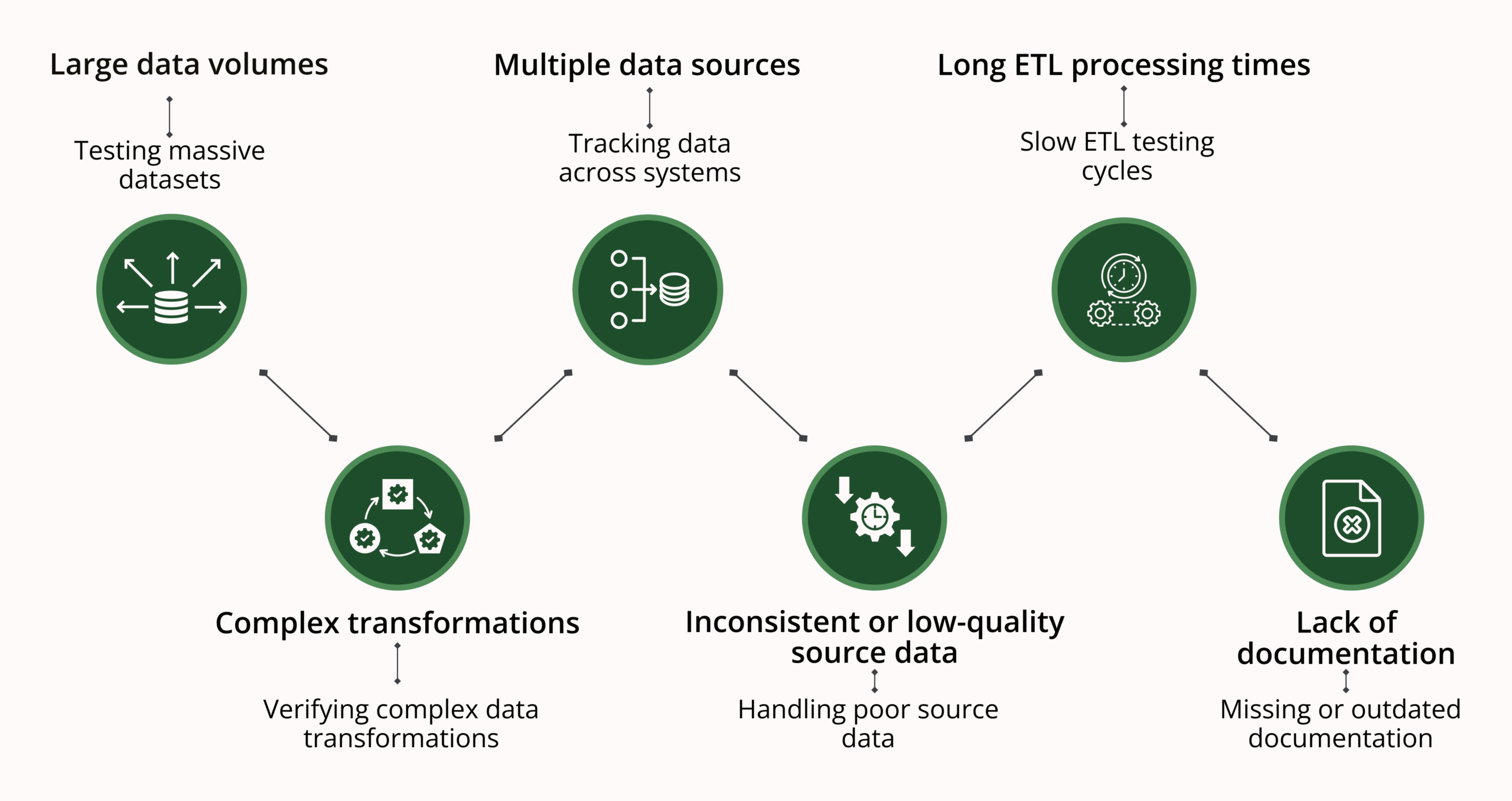

Data warehouse testing comes with its own set of practical challenges. The main ones usually include:

- Large data volumes. Often, data warehouses hold very large datasets. Checking vast amounts of data can be time-consuming and needs effective queries and tools.

- Complex transformations. Typically, data undergoes many changes, calculations, and combinations. It can be challenging to ensure that all business rules are applied properly.

- Multiple data sources. Often, data is received from a variety of systems that have different formats and structures. Tracking the data across these sources can take much time.

- Inconsistent or low-quality source data. Systems of origin can sometimes have values that are missing or not correct. During data warehouse testing, these problems must be considered while still making sure the data is processed correctly.

- Long ETL processing times. ETL tasks can require many hours to finish. This makes testing slow, and it is more difficult to confirm fixes rapidly.

- Lack of documentation. Finally, the documentation may not be fully complete, or it can be outdated. Because of this, those who test may have to understand data logic and mappings by themselves.

Data Warehouse Testing Tools

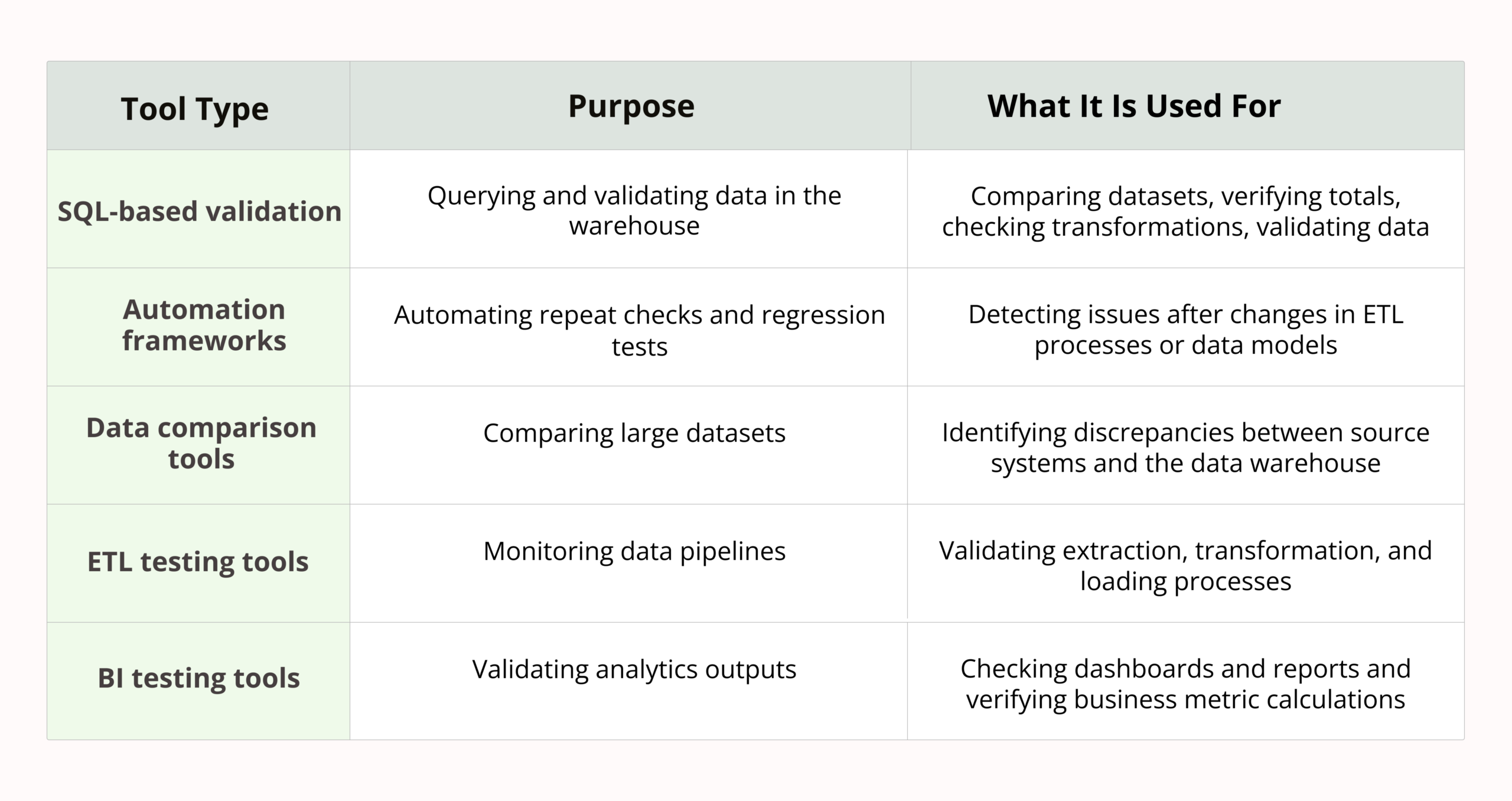

Many QA teams employ various DWH testing tools for querying data, making checks automatic, and validating outcomes:

- SQL-based validation. SQL is the primary tool for testing data warehouse. Testers make use of queries to compare datasets, verify totals, check transformations, and validate data directly in the warehouse.

- Automation frameworks. Automation assists in performing repeat checks and regression tests. It is simpler to identify problems after alterations in ETL processes or data models when using automated tests.

- Data comparison tools. These instruments assist in comparing extensive datasets between source systems and the data warehouse, which makes it simpler to identify discrepancies.

- ETL testing tools. Specialized tools help monitor data pipelines and validate extraction, transformation, and loading processes.

- BI testing tools. These toolsare employed to check dashboards and reports, ensuring that business measures are computed in the right way.

Best Practices for Testing Data Warehouses

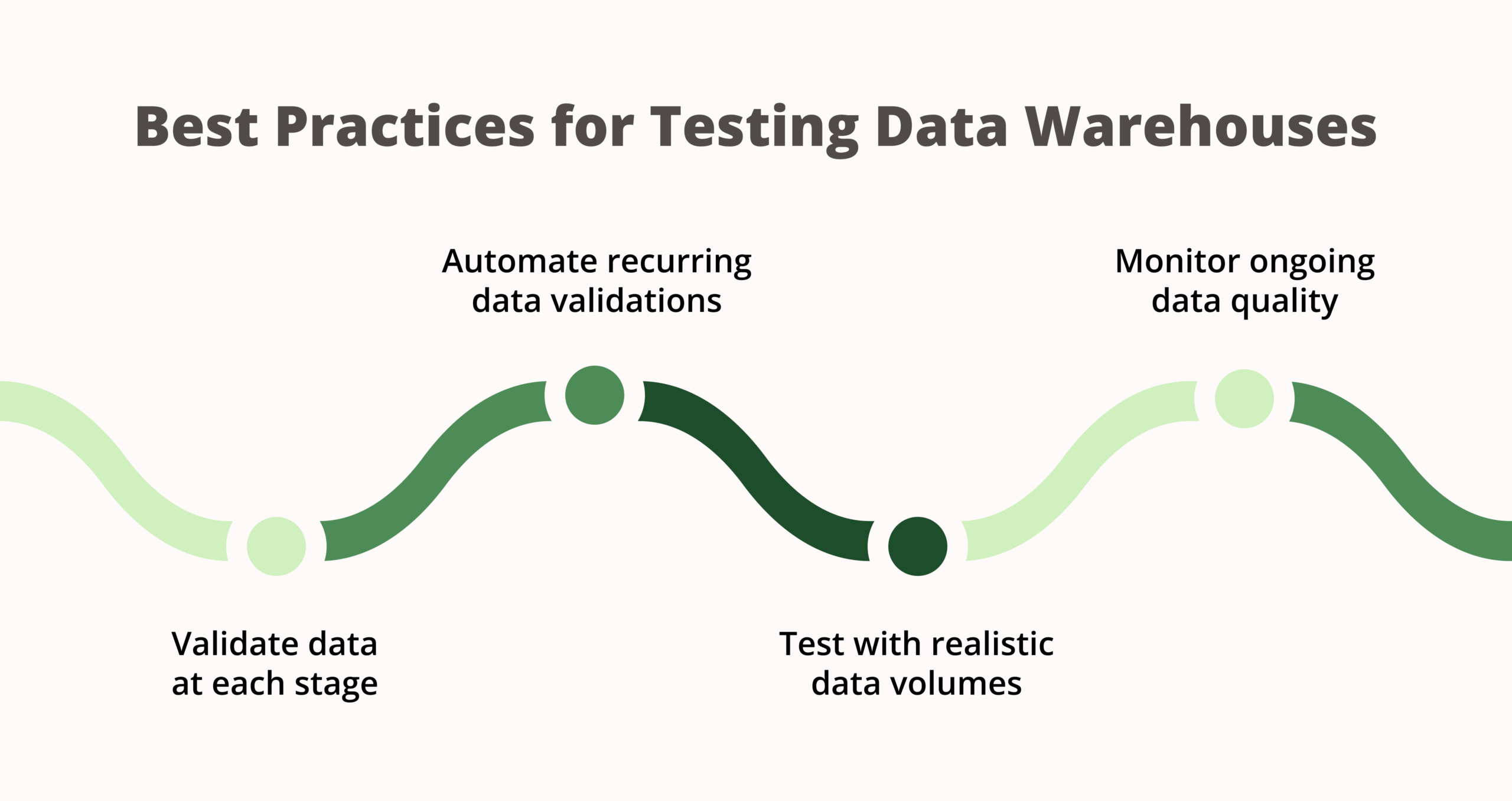

Some useful methods can make data warehouse testing a lot more effective and easy to maintain as time goes on:

- Validate data at each stage of the pipeline. Do not wait until data arrives to final reports. By inspecting data during extraction, transformation, and loading stages, it is simpler to identify problems early on.

- Use automation whenever possible. A lot of data checks need to be done on a regular basis. If you automate the validations, it saves time and makes regression testing more dependable.

- Test with realistic data volumes. If you only use small datasets for data warehouse testing, it is possible some issues can be hidden that may appear when using real production data. By utilizing realistic data, it aids in exposing performance and quality problems of the data at an early stage.

- Maintain clear data mapping documentation. When the details of mappings and transformation rules are plainly documented, it makes testing simpler and lessens misunderstandings when looking into problems.

- Implement data quality monitoring. Ongoing monitoring helps detect any problems with data at an early stage, even when all testing is finished. This maintains the dependability of the data warehouse.

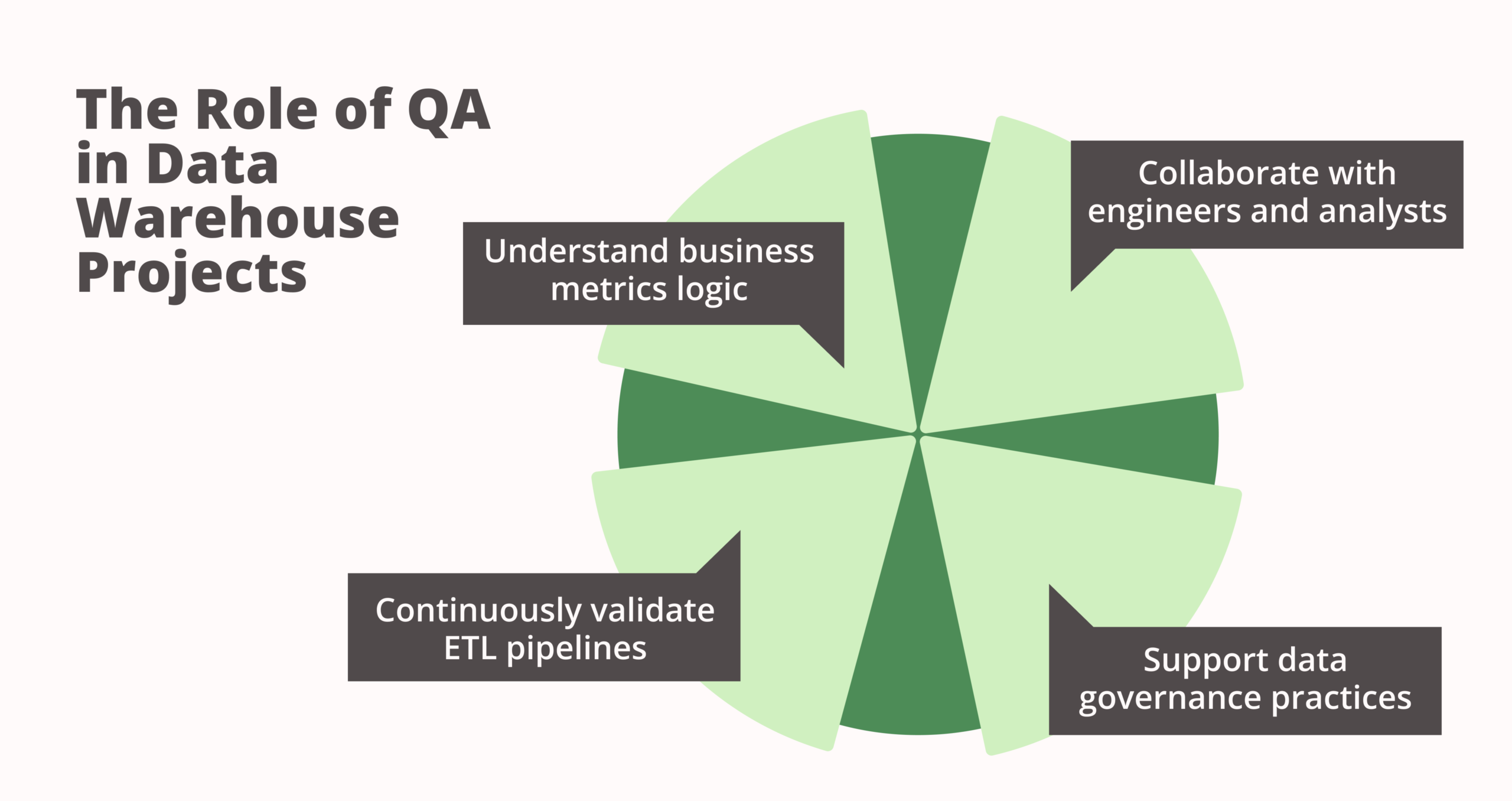

The Role of QA in Data Warehouse Projects

Quality assurance is very significant in data warehouse projects. QA teams help ensure the reliability of data when systems evolve and change, often collaborating closely with both technical and business teams.

- Working with data engineers and analysts. Usually, data warehouse testing involves working together closely. To understand pipelines, QA engineers join forces with data engineers while they team up with analysts to ensure that reports show the expected logic.

- Understanding business logic behind reports. Testing data is more than just about matching tables. QA teams must comprehend how business metrics are formed and what these numbers should signify.

- Continuous validation of ETL pipelines. Data pipelines often change when new sources and transformations are included. QA assists in making sure these changes do not disrupt existing data flows or reports.

- Supporting data governance initiatives. QA teams usually play a part in efforts for data quality and management by aiding in setting validation rules, enhancing paperwork, and advocating uniform practices for data.

Conclusion

Testing the data warehouse is helpful for ensuring the numbers on which people depend are truly right. As warehouse data is important for reports and dashboards, minor problems can cause much confusion. Regular testing helps maintain the accuracy and consistency of the data when there are changes in systems.

When testing takes too much time or becomes very complicated, it can be beneficial to hire outside help. We at White Test Lab can assist in establishing the right ways of checking and maintaining good data quality while your own team works on development and analysis work.